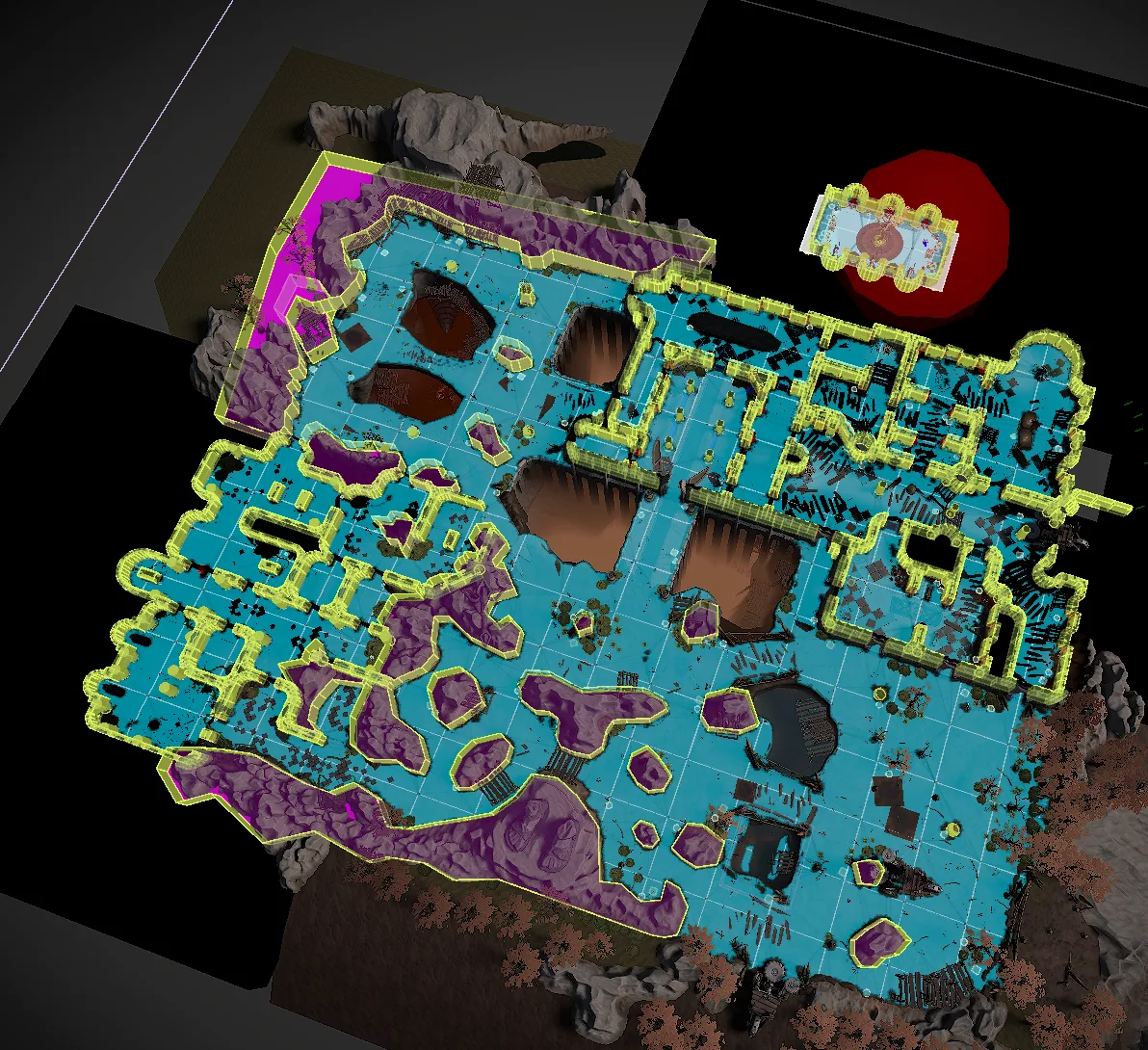

The raw mask is too crunchy, so the renderer runs a small separable blur. I kept it restrained. It cleans up aliasing, but corners still read as corners. Pretty fog that makes a dungeon harder to read is a bad trade.
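To make "small separable blur" concrete, here is a minimal CPU-side sketch of the idea. The actual renderer does this on the GPU; the class name, the 3-tap `(1, 2, 1) / 4` kernel, and the flat `float[]` mask layout are all assumptions for illustration, not the project's code.

```csharp
using System;

public static class SeparableBlurSketch
{
    // Small 3-tap kernel. The weights are an assumption for this sketch.
    static readonly float[] Kernel = { 0.25f, 0.5f, 0.25f };

    // Blurs along one axis only. (dx, dy) = (1, 0) is horizontal, (0, 1) is vertical.
    static float[] BlurAxis(float[] src, int width, int height, int dx, int dy)
    {
        var dst = new float[src.Length];
        for (int y = 0; y < height; y++)
        {
            for (int x = 0; x < width; x++)
            {
                float sum = 0f;
                for (int k = -1; k <= 1; k++)
                {
                    // Clamp at the borders so edge texels reuse their nearest neighbour.
                    int sx = Math.Min(Math.Max(x + k * dx, 0), width - 1);
                    int sy = Math.Min(Math.Max(y + k * dy, 0), height - 1);
                    sum += src[sy * width + sx] * Kernel[k + 1];
                }
                dst[y * width + x] = sum;
            }
        }
        return dst;
    }

    // Two 1D passes instead of one 2D pass: 3 + 3 taps per texel rather than 9.
    public static float[] Blur(float[] mask, int width, int height)
    {
        float[] horizontal = BlurAxis(mask, width, height, 1, 0);
        return BlurAxis(horizontal, width, height, 0, 1);
    }
}
```

A kernel this narrow only softens the one-texel staircase on mask edges, which is why corners still read as corners. Widening it buys smoother fog at the cost of readability.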

After the blur pass, the renderer binds the result as a global shader texture:

WarFogRenderer.cs

```csharp
Shader.SetGlobalTexture(WarFogBufferSample, m_BlurPass);
Shader.SetGlobalFloat(WarFogScale, transform.lossyScale.x);
Shader.SetGlobalVector(WarFogPosition, new Vector4(position.x, 0, position.z, 0));
```

Any shader with world position can sample the same fog value. The main fog plane uses it, but custom lighting, VFX, decals, and object materials can read it too. That was the part I cared about most. The visibility answer exists once, then the rest of the game can reuse it.
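A consumer needs some way to turn a world position into a lookup in that texture. The sketch below shows one plausible mapping, written in C# for readability even though a shader would do the same arithmetic. It assumes the fog texture covers a square of side `WarFogScale` world units centered on `WarFogPosition` in the XZ plane. That convention, and the `WorldToFogUv` helper itself, are assumptions; the real shader may normalize differently.

```csharp
using UnityEngine;

public static class WarFogUvSketch
{
    // Hypothetical helper. Assumes the fog texture spans `scale` world units
    // on each side, centered on `center`, with U along X and V along Z.
    public static Vector2 WorldToFogUv(Vector3 worldPos, Vector3 center, float scale)
    {
        float u = (worldPos.x - center.x) / scale + 0.5f;
        float v = (worldPos.z - center.z) / scale + 0.5f;
        return new Vector2(u, v);
    }
}
```

Under that assumption, a point at the fog center maps to `(0.5, 0.5)`, and anything outside the `[0, 1]` range lies beyond the fog volume.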

There was also a CPU-side sampling path. `WarFogSampler` could ask the GPU whether a point was in fog, then update `InFog` through async readback. `WarFogHider` and UnityEvent hooks could use that for enemies, health bars, minimap icons, or reveal effects.
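For a sense of what that path involves, here is a rough sketch built on Unity's `AsyncGPUReadback`. It is not the project's `WarFogSampler`. The component name, the fields, the fixed sampling interval, and the "low red channel means fogged" polarity are all assumptions.

```csharp
using UnityEngine;
using UnityEngine.Rendering;

public class FogReadbackSketch : MonoBehaviour
{
    public RenderTexture fogTexture;                    // hypothetical: the blurred fog buffer
    public Vector2 sampleUv = new Vector2(0.5f, 0.5f);  // where to sample, in 0..1 texture space
    public float threshold = 0.5f;                      // assumed cutoff between fogged and visible
    public float interval = 0.25f;                      // seconds between requests (assumed rate)

    public bool InFog { get; private set; }

    float m_NextSampleTime;
    bool m_Pending;

    void Update()
    {
        // Keep at most one request in flight and throttle to the chosen interval.
        if (m_Pending || fogTexture == null || Time.time < m_NextSampleTime)
            return;

        m_NextSampleTime = Time.time + interval;
        m_Pending = true;
        AsyncGPUReadback.Request(fogTexture, 0, TextureFormat.RGBA32, OnReadback);
    }

    void OnReadback(AsyncGPUReadbackRequest request)
    {
        m_Pending = false;
        if (request.hasError)
            return;

        // The result arrives some frames after the request, so InFog always lags the GPU.
        var pixels = request.GetData<Color32>();
        int x = Mathf.Clamp((int)(sampleUv.x * request.width), 0, request.width - 1);
        int y = Mathf.Clamp((int)(sampleUv.y * request.height), 0, request.height - 1);
        byte value = pixels[y * request.width + x].r;

        // Assumption: low values mean fogged. Flip the comparison if the mask is inverted.
        InFog = value / 255f < threshold;
    }
}
```

Reading back the whole texture for a single point is wasteful. A real version would batch many sample points against one readback, or request only a small region.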

I would call that part a hook, not a finished production feature. In the checked-in renderer, the sampler update calls are commented out in the main loop. The shape is there, but it still needed decisions about sampling rate, latency, and which systems actually deserved CPU-side fog state.