01 Overview

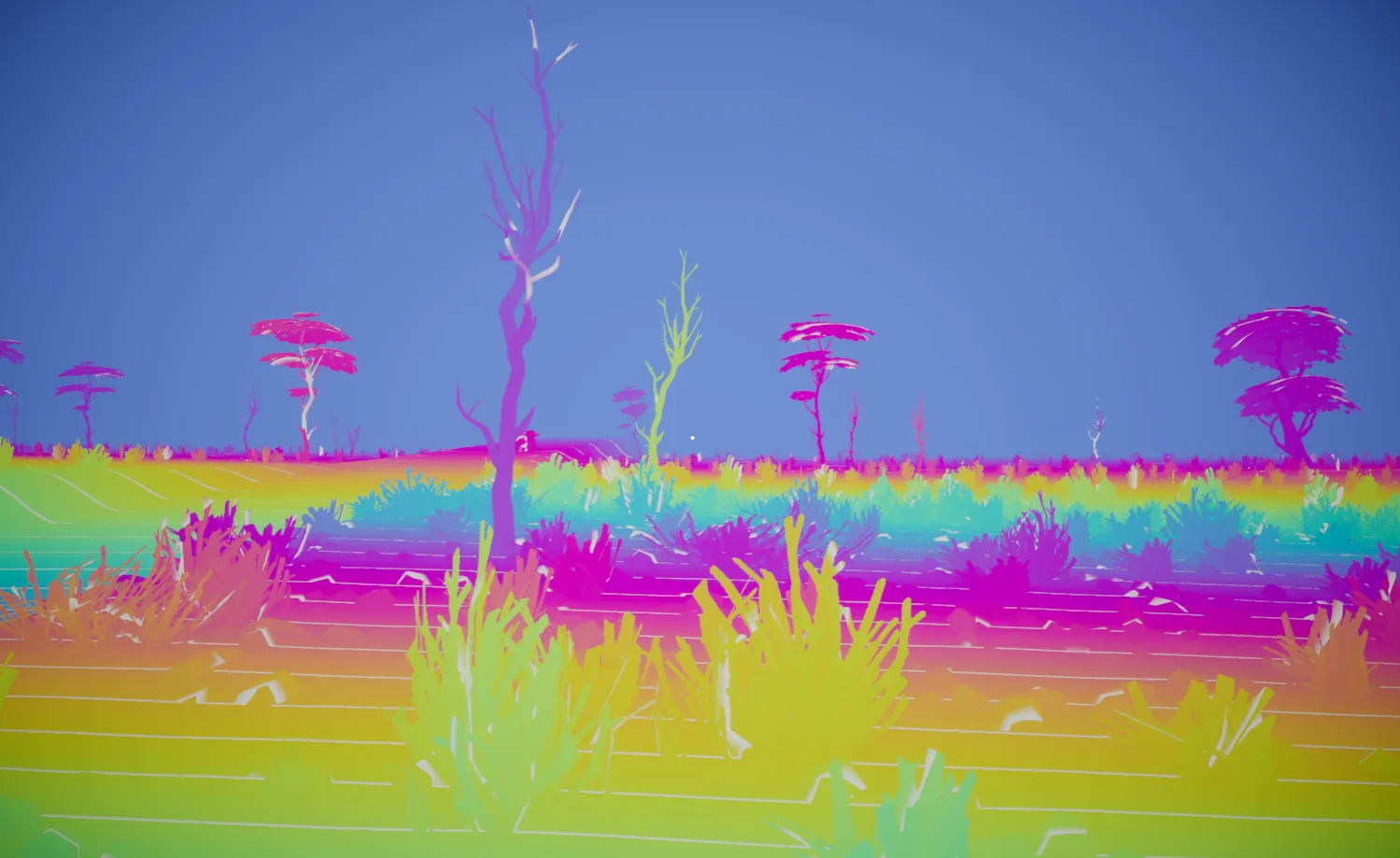

I built this fog renderer for an unannounced project because the built-in options were not giving me the control I wanted. I needed fog that could react to the sky, local lights, headlights, weather, and transparent materials without turning into a full-screen blur pass.

The system uses a froxel volume: a camera-aligned 3D grid that stores density, scattering, and transmittance through the view frustum. Each frame, the renderer fills that volume, lights it, integrates it from front to back, and composites it over the scene.
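To make the integrate and composite steps concrete, here is a minimal CPU-side sketch of front-to-back integration along a single froxel column (one screen tile, walked from the near slice to the far slice). It is not the renderer's actual shader code: the function names, the `(scattered, extinction)` slice layout, and the uniform `slice_depth` are assumptions for illustration, and the per-slice integral is the common analytic form for a homogeneous slice.

```python
import math


def integrate_column(slices, slice_depth):
    """Front-to-back integration for one froxel column (illustrative sketch).

    slices: list of (scattered, extinction) pairs ordered near to far, where
            `scattered` is in-scattered radiance per unit length and
            `extinction` is the extinction coefficient of that slice.
    slice_depth: thickness of every slice (assumed uniform here).
    Returns (accumulated_radiance, transmittance) at the far end of the column.
    """
    radiance = 0.0
    transmittance = 1.0  # nothing has attenuated the view ray yet
    for scattered, extinction in slices:
        # Fraction of light that survives crossing this slice (Beer-Lambert).
        slice_transmittance = math.exp(-extinction * slice_depth)
        if extinction > 1e-6:
            # Analytic integral of scattering across a homogeneous slice.
            slice_light = scattered * (1.0 - slice_transmittance) / extinction
        else:
            # Near-zero extinction: fall back to the thin-slice limit.
            slice_light = scattered * slice_depth
        # Light from this slice is dimmed by all the fog in front of it.
        radiance += transmittance * slice_light
        transmittance *= slice_transmittance
    return radiance, transmittance


def composite(scene_color, radiance, transmittance):
    """Attenuate the scene by the fog in front of it, then add fog light."""
    return scene_color * transmittance + radiance


if __name__ == "__main__":
    # Four slices of uniform fog, each one unit deep.
    column = [(0.5, 0.5)] * 4
    fog_light, fog_transmittance = integrate_column(column, 1.0)
    print(composite(0.2, fog_light, fog_transmittance))
```

Because each slice only needs the running transmittance of the slices in front of it, the result at every depth can be stored as the loop advances, which is what lets the composite step look up fog for any pixel depth with a single volume sample.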

The pass order looks like this:

Noise Bake → Inject Density → Scatter Lighting → Temporal Reprojection → Spatial Filter → Integrate → Composite → Update Opaque Texture

That last step matters more than it sounds. The fogged color gets copied back into `_CameraOpaqueTexture`, so refractive shaders sample a scene that already has atmosphere in it.